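Quantization-aware training currently supports only basic INT8 quantization. INT8 quantization represents 32-bit FP32 data with 8-bit INT8 data and converts the FP32 convolution computation (multiply-accumulate operations) into INT8 convolution, which speeds up computation and compresses the model. For a quantization sample, see Obtaining More Samples > resnet_v1_50. The layers that quantization-aware training can quantize, and their constraints, are as follows: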

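| Layer | Constraints |
|---|---|
| MatMul: fully connected layer | transpose_a=False, transpose_b=False, adjoint_a=False, adjoint_b=False |
| Conv2D: convolution layer | Due to hardware constraints, quantization-aware training is not recommended when the number of input channels of the original model satisfies Cin <= 16; otherwise, the accuracy of the quantized deployment model may drop during inference. |
| DepthwiseConv2dNative: depthwise convolution layer | |
| Conv2DBackpropInput: deconvolution layer | |
| AvgPool: average pooling layer | - |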
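As a rough illustration of the INT8 quantization described at the start of this section, the sketch below quantizes FP32 values to INT8, performs the multiply-accumulate in the integer domain, and rescales the result. It assumes a symmetric per-tensor scale derived from the maximum absolute value; this is a generic scheme chosen for illustration and is not necessarily the algorithm AMCT applies. The function names `choose_scale`, `quantize_int8`, and `int8_dot` are hypothetical.

```python
def choose_scale(values):
    """Pick a symmetric per-tensor scale so the largest magnitude maps to 127.

    Assumption: symmetric quantization without an offset.
    """
    max_abs = max(abs(v) for v in values)
    return max_abs / 127.0 if max_abs > 0 else 1.0


def quantize_int8(values, scale):
    """Map FP32 values to INT8 codes: round(x / scale), clamped to [-128, 127]."""
    return [max(-128, min(127, round(v / scale))) for v in values]


def int8_dot(activations, weights):
    """Multiply-accumulate on INT8 codes, then rescale back to floating point."""
    scale_a = choose_scale(activations)
    scale_w = choose_scale(weights)
    q_a = quantize_int8(activations, scale_a)
    q_w = quantize_int8(weights, scale_w)
    # Integer accumulation; real hardware typically uses a wider accumulator.
    acc = sum(a * w for a, w in zip(q_a, q_w))
    return acc * scale_a * scale_w


activations = [0.5, -1.0, 0.25, 2.0]
weights = [0.1, 0.2, -0.3, 0.4]
fp32_result = sum(a * w for a, w in zip(activations, weights))
int8_result = int8_dot(activations, weights)
print(fp32_result, int8_result)
```

The INT8 result only approximates the FP32 result. The gap between them is the quantization error that quantization-aware training tries to reduce by training with quantization in the loop.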

API Call Flow

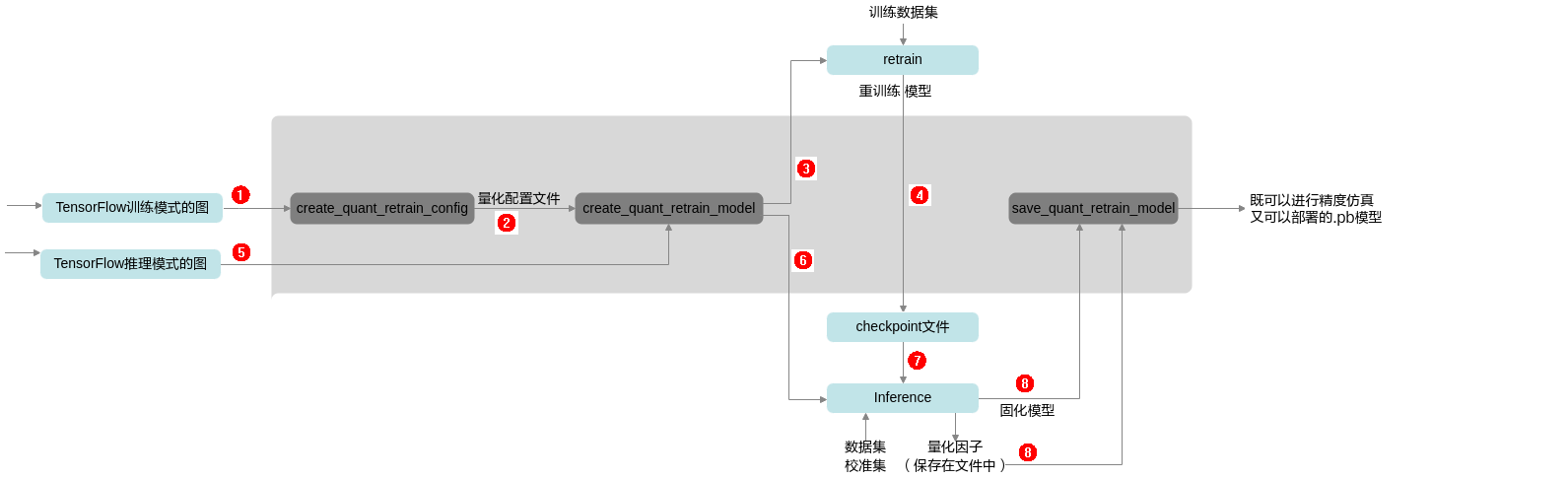

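Figure 1 shows the API call flow of quantization-aware training.

The blue parts are implemented by the user, and the gray parts are implemented by calling APIs provided by the Ascend Model Compression Toolkit (AMCT). To enable quantization, import the library into the inference code of the original TensorFlow network and call the corresponding APIs at specific positions: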

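1. Build the graph in training mode (with the BN is_training parameter set to True), then call create_quant_retrain_config to generate the quantization configuration file (step 1 in Figure 1).

2. Call the graph modification API create_quant_retrain_model, which modifies the training graph for quantization according to the quantization configuration file (step 2 in Figure 1).

3. Use an adaptive learning rate optimizer (RMSPropOptimizer) to build the backward gradient graph. This step must be performed after step 2.

       optimizer = tf.compat.v1.train.RMSPropOptimizer(
           ARGS.learning_rate, momentum=ARGS.momentum)
       train_op = optimizer.minimize(loss)

4. Create a session, train the model, and save the trained parameters as a checkpoint file (steps 3 and 4 in Figure 1).

       with tf.compat.v1.Session() as sess:
           sess.run(tf.compat.v1.global_variables_initializer())
           sess.run(outputs)
           # Save the trained parameters as a checkpoint file.
           saver_save.save(sess, retrain_ckpt, global_step=0)

5. Build the graph in inference mode (with the BN is_training parameter set to False), then call create_quant_retrain_model to modify the inference graph for quantization according to the quantization configuration file (step 5 in Figure 1).

6. Create a session, restore the trained parameters, run the quantization output node (retrain_ops[-1]) to write the quantization factors to the record file, and freeze the model into a pb model (steps 6 and 7 in Figure 1).

       variables_to_restore = tf.compat.v1.global_variables()
       saver_restore = tf.compat.v1.train.Saver(variables_to_restore)
       with tf.compat.v1.Session() as sess:
           sess.run(tf.compat.v1.global_variables_initializer())
           # Restore the trained parameters.
           saver_restore.restore(sess, retrain_ckpt)
           # Write the quantization factors to the record file.
           sess.run(retrain_ops[-1])
           # Freeze the graph into a pb model.
           constant_graph = tf.compat.v1.graph_util.convert_variables_to_constants(
               sess, eval_graph.as_graph_def(),
               [output.name[:-2] for output in outputs])
           with tf.io.gfile.GFile(frozen_quant_eval_pb, 'wb') as f:
               f.write(constant_graph.SerializeToString())

7. Call the model saving API save_quant_retrain_model. Based on the quantization factor record file and the frozen model, it exports a model that can be used for accuracy simulation in the TensorFlow environment and can also be deployed on the Ascend AI Processor (step 8 in Figure 1).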
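The freezing code above passes node names to convert_variables_to_constants by slicing each tensor name with `[:-2]`, which relies on the name ending in a single-digit output index such as `:0`. TensorFlow tensor names have the form `<node_name>:<output_index>`, so a slightly more defensive conversion can be sketched as follows. The helper name `tensor_name_to_node_name` and the sample tensor names are hypothetical and serve only as an illustration.

```python
def tensor_name_to_node_name(tensor_name):
    """Strip the ':<output_index>' suffix from a TensorFlow tensor name."""
    node_name, sep, index = tensor_name.rpartition(':')
    # Strip only when the suffix really is a numeric output index;
    # otherwise the string is already a node name.
    if sep and index.isdigit():
        return node_name
    return tensor_name


# Hypothetical tensor names, used only to show the behavior.
output_tensor_names = ['resnet/logits/BiasAdd:0', 'split:10', 'softmax']
output_node_names = [tensor_name_to_node_name(n) for n in output_tensor_names]
print(output_node_names)
```

For outputs whose index is `0`, this produces the same result as `output.name[:-2]`; the difference only shows up for output indices of 10 or higher and for names that carry no index.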

Call Example

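- In the following example, steps marked "to be completed by the user" must be filled in according to your own model and dataset. The code shown for these steps is illustrative only.

- For the calls to AMCT, adjust the function arguments as needed. Quantization-aware training builds on your existing training process, so make sure that you already have a training script for the TensorFlow environment and that the trained model reaches normal accuracy.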

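1. Import the AMCT package and set the log level.

       import amct_tensorflow as amct
       amct.set_logging_level(print_level="info", save_level="info")

2. (Optional; to be completed by the user) Create the graph, load the trained parameters, and run inference in the TensorFlow environment to verify that the environment and the inference script work properly.

   This step is recommended because it confirms that the original model can run inference with normal accuracy. You can use a subset of the test set to reduce the run time.

       user_test_evaluate_model(evaluate_model, test_data)

3. (To be completed by the user) Create the training graph.

       train_graph = user_load_train_graph()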

4. Call AMCT to run the quantization flow.

   a. Generate the quantization configuration.

          config_file = './tmp/config.json'
          simple_cfg = './retrain.cfg'
          amct.create_quant_retrain_config(config_file=config_file,
                                           graph=train_graph,
                                           config_defination=simple_cfg)

   b. Modify the graph by inserting quantization-related operators.

          record_file = './tmp/record.txt'
          retrain_ops = amct.create_quant_retrain_model(graph=train_graph,
                                                        config_file=config_file,
                                                        record_file=record_file)

   c. (To be completed by the user) Use the modified graph to create the backward gradients, train on the training set, and train the quantization factors.

      - Use the modified graph to create the backward gradients. This step must be performed after step 4.b.

            optimizer = user_create_optimizer(train_graph)

      - Restore the model from the trained checkpoint and train it.

      - After training, run inference to compute and save the quantization factors.

        Inference and training must run in the same session. The tensor to run for inference is retrain_ops[-1].output_tensor.

            user_infer_graph(train_graph, retrain_ops[-1].output_tensor)

      - Save the training graph, including the trained parameters, as a pb model.

            user_save_graph_to_pb(train_graph, trained_pb)

   d. Save the model.

          quant_model_path = './result/user_model'
          amct.save_quant_retrain_model(pb_model=trained_pb,
                                        outputs=user_model_outputs,
                                        record_file=record_file,
                                        save_path=quant_model_path)

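5. (Optional; to be completed by the user) Run inference in the TensorFlow environment with the quantized model user_model_quantized.pb and the test set to measure the accuracy of the quantized simulation model.

   Compare this accuracy with the original accuracy obtained in step 2 (verification of the original model) to see how quantization affects accuracy.

       quant_model = './result/user_model_quantized.pb'
       user_do_inference(quant_model, test_data)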
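The accuracy comparison in the last step can be sketched in plain Python as follows. This is a minimal sketch that assumes a classification model evaluated by top-1 accuracy; the helper names `top1_accuracy` and `accuracy_drop` are hypothetical, and the prediction lists are toy values rather than measured results.

```python
def top1_accuracy(predictions, labels):
    """Return the fraction of samples whose predicted class equals the label."""
    if len(predictions) != len(labels):
        raise ValueError("predictions and labels must have the same length")
    if not labels:
        raise ValueError("at least one sample is required")
    correct = sum(1 for p, y in zip(predictions, labels) if p == y)
    return correct / len(labels)


def accuracy_drop(original_acc, quantized_acc):
    """Return how much accuracy was lost after quantization (positive = worse)."""
    return original_acc - quantized_acc


# Toy values for illustration only; these are not measured results.
labels = [0, 1, 2, 1]
original_predictions = [0, 1, 2, 0]    # e.g. collected from the original model
quantized_predictions = [0, 1, 1, 0]   # e.g. collected from the quantized model

original_acc = top1_accuracy(original_predictions, labels)
quantized_acc = top1_accuracy(quantized_predictions, labels)
drop = accuracy_drop(original_acc, quantized_acc)
print(f"original={original_acc:.2f} quantized={quantized_acc:.2f} drop={drop:.2f}")
```

In practice the two prediction lists would come from running the original model and the quantized simulation model on the same test samples, so that the difference reflects quantization alone.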